Bases: airflow.sensors.base_sensor_operator.BaseSensorOperator

Waits for a Python callable to return True.

Users can pass input arguments in templates_dict, e.g. templates_dict={'start_ds': 1970}, and access the argument by calling kwargs['templates_dict']['start_ds'] in the callable.

- python_callable (python callable) – A reference to an object that is callable

- op_kwargs (dict) – a dictionary of keyword arguments that will get unpacked in your function

- op_args (list) – a list of positional arguments that will get unpacked when calling your callable

- provide_context (bool) – if set to true, Airflow will pass a set of keyword arguments that can be used in your function. This set of kwargs corresponds exactly to what you can use in your Jinja templates. For this to work, you need to define **kwargs in your function header.

- templates_dict (dict of str) – a dictionary where the values are templates that will get templated by the Airflow engine sometime between __init__ and execute, and are made available in your callable's context after the template has been applied.
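To make the parameters above concrete, here is a minimal sketch of a callable that reads a rendered value from templates_dict, plus a hypothetical helper that wires it into the sensor. The names marker_exists, build_sensor, the ".done" marker file convention, and the import path (which assumes an Airflow 1.10.x installation) are illustrative assumptions, not part of the reference above. The last lines simulate two pokes without Airflow installed.

```python
import os
import tempfile


def marker_exists(directory, **kwargs):
    """Return True once the marker file for the rendered date exists."""
    # Airflow renders templates_dict before calling the function, so the
    # value here is already a plain string such as "2021-01-01".
    start_ds = kwargs["templates_dict"]["start_ds"]
    return os.path.exists(os.path.join(directory, start_ds + ".done"))


def build_sensor(dag, directory):
    """Hypothetical wiring; the import path assumes Airflow 1.10.x."""
    from airflow.contrib.sensors.python_sensor import PythonSensor

    return PythonSensor(
        task_id="wait_for_marker",
        python_callable=marker_exists,
        op_args=[directory],          # unpacked as positional arguments
        provide_context=True,         # needed so templates_dict reaches kwargs
        templates_dict={"start_ds": "{{ ds }}"},
        poke_interval=60,
        dag=dag,
    )


# Simulate what the sensor does on each poke, without needing Airflow.
with tempfile.TemporaryDirectory() as tmp:
    rendered = {"templates_dict": {"start_ds": "2021-01-01"}}
    first_poke = marker_exists(tmp, **rendered)
    open(os.path.join(tmp, "2021-01-01.done"), "w").close()
    second_poke = marker_exists(tmp, **rendered)
```

The sensor keeps calling the callable at each poke interval until it returns True, which is why the function only reports state and never waits by itself.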

The templates_dict argument is templated, so each value in the dictionary is evaluated as a Jinja template. Separately, in airflow.operators.python_operator, an operator that derives from PythonOperator expects a Python function that returns a single task_id or a list of task_ids to follow; each returned task_id should point to a task.

template_fields = ['templates_dict']

- Templates and macros in Apache Airflow are the way to pass dynamic data to your DAGs at runtime. Typical uses are executing a SQL query that depends on the execution date of your DAG, or using the DAG id in a Bash script to generate data.


poke(self, context)

The Airflow experimental API allows you to trigger a DAG over HTTP. This comes in handy if you are integrating with cloud storage such as Azure Blob Store.

Although Airflow has the concept of Sensors, an external trigger allows you to avoid polling for a file to appear.

In this blog post, I will show how we use Azure Functions to trigger a DAG when a file is uploaded to an Azure Blob Store.

Experimental API

The experimental API allows you to fetch information about DAGs and tasks, and also to trigger and even delete a DAG. In this blog post we will use it to trigger a DAG.

By default the experimental API is unsecured, so before we continue we should define an auth_backend which secures it. There are multiple options available; in this blog post we use the password_auth backend, which implements HTTP Basic Authentication, something you should only use over HTTPS.

Enabling the

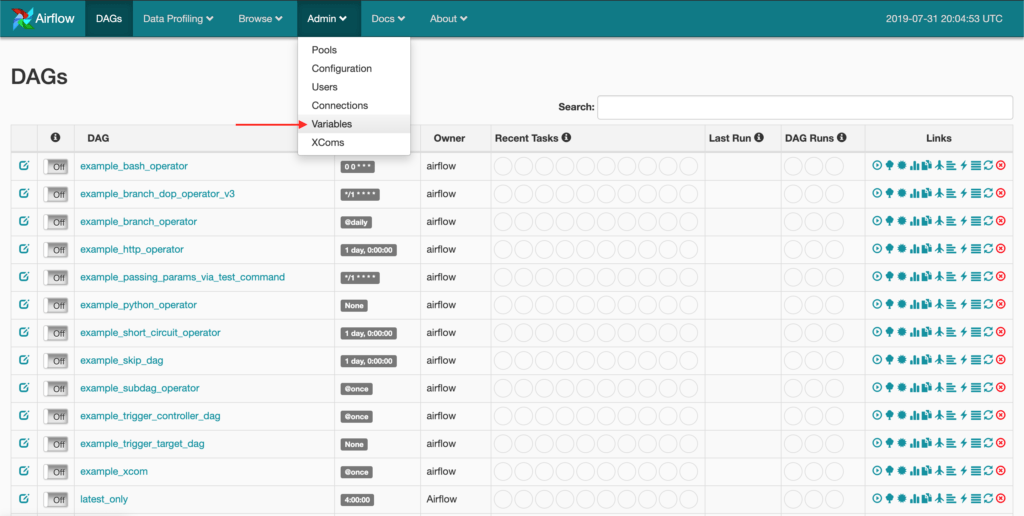

password_auth backend is a small change to your Airflow config file:Next, use the Airflow web interface to create a new user to be used by the Azure Function to trigger the DAG.

Azure Functions

Azure allows you to define small snippets of code which can be triggered by a whole range of other Azure products. Examples are being triggered by a message on an EventHub, or in this case a file appearing on a Blob Store.

There are a couple of different languages to choose from, and in this case I was a bit lazy and went for JavaScript in the BlobTrigger wizard.

You need to link a storage account, and define the path you want to monitor. In my case I configured it to monitor

my-data/{name}, where my-data is the name of the container within the storage account.Next, you're presented with a small example. I've extending their example a bit which resulted in the following piece of code:

You need to adjust the

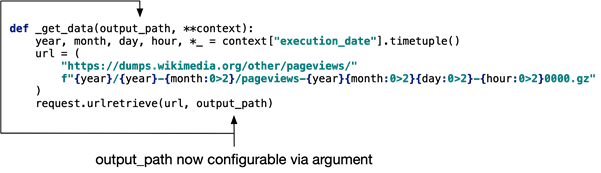

The nice thing here is that I'm actually passing the filename of the new file to Airflow, which I can use in the DAG lateron.

AIRFLOW_URL, DAG_NAME, AIRFLOW_USER, and AIRFLOW_PASSWORD.The nice thing here is that I'm actually passing the filename of the new file to Airflow, which I can use in the DAG lateron.
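The function itself is JavaScript, but the HTTP call it makes can be sketched in a few lines of Python. This is an illustration under assumptions, not the code from the post: the placeholder values, the helper name build_trigger_request, and the "filename" key inside conf are all hypothetical. It builds a POST to the experimental API's dag_runs endpoint with an HTTP Basic Authentication header, and does not send it.

```python
import base64
import json
import urllib.request

# Placeholder values (assumptions); replace with your own settings.
AIRFLOW_URL = "https://airflow.example.com"
DAG_NAME = "process_blob"
AIRFLOW_USER = "azure_function"
AIRFLOW_PASSWORD = "change-me"


def build_trigger_request(filename):
    """Build (but do not send) a request that triggers a DAG run."""
    url = "{}/api/experimental/dags/{}/dag_runs".format(AIRFLOW_URL, DAG_NAME)

    # HTTP Basic Authentication: base64 of "user:password".
    credentials = "{}:{}".format(AIRFLOW_USER, AIRFLOW_PASSWORD)
    token = base64.b64encode(credentials.encode("utf-8")).decode("ascii")

    # The "filename" key is an assumed convention between trigger and DAG.
    body = json.dumps({"conf": {"filename": filename}}).encode("utf-8")

    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": "Basic " + token,
            "Content-Type": "application/json",
        },
    )


request = build_trigger_request("my-data/report.csv")
# To actually trigger the DAG: urllib.request.urlopen(request)
```

Depending on the Airflow version, the endpoint may expect conf as a JSON-encoded string rather than a nested object, so check this against the release you run.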

Airflow DAG

The full Airflow DAG itself I won't post, but in the excerpt below I show how to use the filename in the DAG.

Concluding

So in this blog post I've shown how to use an Azure Function to trigger an Airflow DAG using the experimental API.

I very much like the fact that it removes the need of polling for a file, and it allows us to integrate Airflow nicely in an otherwise cloud native setup.
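For readers who want to see the DAG side of this hand-off, here is a minimal sketch of a task callable that reads the filename from the run configuration of an externally triggered DAG run. It is not the excerpt from the original DAG: the function names, the "filename" conf key, and the Airflow 1.10.x import path are assumptions for illustration. The final lines simulate the context Airflow would pass, so the sketch runs without Airflow installed.

```python
from types import SimpleNamespace


def process_uploaded_file(**context):
    """Read the filename that the external trigger placed in the run conf."""
    # dag_run.conf holds the "conf" payload sent with the trigger request;
    # it can be None when the run was not triggered with a payload.
    conf = context["dag_run"].conf or {}
    filename = conf.get("filename")
    if filename is None:
        raise ValueError("DAG run was triggered without a 'filename' in conf")
    return "processing " + filename


def build_task(dag):
    """Hypothetical wiring; the import path assumes Airflow 1.10.x."""
    from airflow.operators.python_operator import PythonOperator

    return PythonOperator(
        task_id="process_uploaded_file",
        python_callable=process_uploaded_file,
        provide_context=True,  # passes dag_run and friends as kwargs
        dag=dag,
    )


# Simulate the context of a triggered run, without needing Airflow.
fake_context = {"dag_run": SimpleNamespace(conf={"filename": "my-data/report.csv"})}
result = process_uploaded_file(**fake_context)
```

Failing loudly when the key is missing is a deliberate choice here: a scheduled or manually started run without a payload would otherwise process nothing without any visible error.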
